Google Pixel phones have changed how people think about smartphone photography, and the latest addition may be the biggest leap yet for night photography: a mode called Night Sight. This new feature from Google combines photographic techniques with machine learning, artificial intelligence, and algorithms, producing some of the best low-light image processing seen on a smartphone.

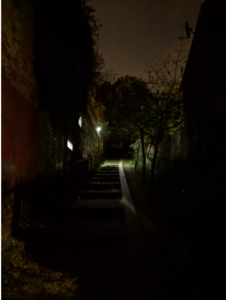

Let’s take a look at some photos. All of the images were taken with a Google Pixel 3 XL, and the flash was not used!

In each pair, the first picture was taken in the default mode and the second with Night Sight mode enabled.

As the following images show, the Pixel 3 was already one of the best devices for low-light shots, but Night Sight brings a qualitative shift.

The previous images are some of the best evidence of Google’s ability to process images.

The following images show the Pixel 3’s ability to distinguish objects in a scene and its use of machine learning to produce a clear, accurate picture. You might then ask how photos taken with the Pixel’s flash compare. The images below show the difference between the default mode, the default mode with flash, and Night Sight mode.

As we can see, Night Sight clearly outperforms the flash, which only lights up nearby areas.

Google has not officially released the app yet, and it may see many improvements before release, but you can download the Night Sight demo application from the XDA-Developers site.